At some point the output itself was no longer enough. I needed the tool to say where a result came from and how it had been shaped.

Once A11Y Cat had multiple detection sources and multiple issue classes, it also had a new trust problem: even if the product showed the right result, could someone tell why it existed?

That is where provenance enters the repo in a serious way.

The commits from April 12 and 13 add source attribution, overlap-aware composition, capability-class source attribution, export provenance metadata, omission rules, and comparison provenance. The README starts describing findings not just by severity or issue type, but by source: axe, local deterministic, heuristic, manual prompt, runtime diagnostic, or AI-assisted. It also introduces capability-class labels such as “Pure axe,” “Product deterministic,” and “Manual review guidance.”
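To make the shape of that attribution concrete, here is a minimal sketch of how a finding might carry its source and capability class. It is not the code in source-attribution.js. The identifiers (`SOURCE_KINDS`, `CAPABILITY_CLASS_BY_SOURCE`, `attributeFinding`), the kebab-case source keys, and the mapping from source to capability class are all assumptions made for illustration. Only the six source names and the three class labels come from the README as described above.

```javascript
// Hypothetical sketch: names and the source-to-class mapping are assumptions,
// not the actual contents of source-attribution.js.

// The six source kinds the README describes, as assumed kebab-case keys.
const SOURCE_KINDS = Object.freeze([
  "axe",
  "local-deterministic",
  "heuristic",
  "manual-prompt",
  "runtime-diagnostic",
  "ai-assisted",
]);

// Only three capability-class labels are named in the post, so only those
// are mapped here. Which source maps to which label is a guess.
const CAPABILITY_CLASS_BY_SOURCE = Object.freeze({
  "axe": "Pure axe",
  "local-deterministic": "Product deterministic",
  "manual-prompt": "Manual review guidance",
});

// Returns a copy of the finding with a provenance record attached.
// Unknown sources are rejected rather than silently defaulted.
function attributeFinding(finding, source) {
  if (!SOURCE_KINDS.includes(source)) {
    throw new Error(`Unknown source kind: ${source}`);
  }
  // A null class means "not labelled in this sketch", not "no class exists".
  const capabilityClass = CAPABILITY_CLASS_BY_SOURCE[source] ?? null;
  return { ...finding, provenance: { source, capabilityClass } };
}

// Usage: an axe finding ends up labelled with both source and class.
const attributed = attributeFinding({ ruleId: "image-alt" }, "axe");
console.log(attributed.provenance);
```

Rejecting unknown sources instead of falling back to a default keeps the attribution from quietly claiming more than it knows.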

That is a lot of metadata to expose, and there is a reason for it. Without provenance, the tool is asking users to trust an aggregate result without enough context. With provenance, the tool can say: this came from axe, this came from our own logic, this was heuristic, this was manual guidance, this was AI-assisted, this was kept while another overlapping source was suppressed.

I think the overlap-aware composition model is especially interesting. Once the product is combining engine output with product-specific checks, duplicate or overlapping findings become a real UI problem. But if you suppress one source, you also risk hiding evidence about how the result was derived. So the repo starts tracking suppressed contributor provenance instead of just deleting the less convenient duplicate.
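A rough sketch of that idea, under stated assumptions: findings overlap when they share a rule and a target, one source wins by a fixed priority, and the losers are recorded on the kept finding rather than dropped. The overlap key, the priority order, and every identifier here (`composeFindings`, `suppressedContributors`, `SOURCE_PRIORITY`) are hypothetical. The repo's real composition logic may decide overlap and precedence quite differently.

```javascript
// Hypothetical sketch of overlap-aware composition. The overlap key,
// the priority order, and all names are assumptions for illustration.

// Assumed precedence: earlier entries win when findings overlap.
const SOURCE_PRIORITY = ["axe", "local-deterministic", "heuristic"];

// Sources missing from the priority list rank last.
function rank(source) {
  const index = SOURCE_PRIORITY.indexOf(source);
  return index === -1 ? SOURCE_PRIORITY.length : index;
}

function composeFindings(findings) {
  // Group findings that point at the same rule on the same target.
  const groups = new Map();
  for (const finding of findings) {
    const key = `${finding.ruleId}::${finding.target}`;
    const group = groups.get(key) ?? [];
    group.push(finding);
    groups.set(key, group);
  }

  // Keep one finding per group, but remember who else contributed.
  return [...groups.values()].map((group) => {
    const ranked = [...group].sort((a, b) => rank(a.source) - rank(b.source));
    const [kept, ...suppressed] = ranked;
    return {
      ...kept,
      provenance: {
        source: kept.source,
        suppressedContributors: suppressed.map((f) => ({
          source: f.source,
          reason: "overlap",
        })),
      },
    };
  });
}

// Usage: the heuristic duplicate is suppressed but still visible in provenance.
const composed = composeFindings([
  { ruleId: "image-alt", target: "img.hero", source: "heuristic" },
  { ruleId: "image-alt", target: "img.hero", source: "axe" },
  { ruleId: "label", target: "input#email", source: "local-deterministic" },
]);
console.log(JSON.stringify(composed, null, 2));
```

The UI can render one card per group, while an export or a debug view can still show that a second source saw the same thing.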

That is not the kind of work you do if you only care about prettier cards. That is the kind of work you do when you realise people may need to understand whether a finding is “axe saw it,” “the product inferred it,” or “this is a manual review prompt built from partial signals.”

The export contract changes show the same mindset. Comparison provenance should be explicit or omitted, not guessed. Export sections should carry omission semantics when evidence is incomplete. That is a very particular kind of honesty. The product is not just showing more metadata because metadata looks advanced. It is trying to stop downstream consumers from overreading what the tool actually knows.
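The "explicit or omitted, not guessed" rule can be sketched as an export section builder that refuses to fill in missing baseline attribution. This is an illustration only: the function name `buildComparisonSection`, the field names (`scanId`, `source`, `omissionReason`), and the status values are assumptions, not the actual export contract in exports.js.

```javascript
// Hypothetical sketch of omission semantics for a comparison export section.
// Field names and status values are assumptions, not the real contract.

function buildComparisonSection(comparison) {
  const baseline = comparison?.baseline;

  // Provenance counts as explicit only when the baseline is fully attributed.
  const hasExplicitProvenance = Boolean(
    baseline && baseline.scanId && baseline.source
  );

  if (!hasExplicitProvenance) {
    // Say that the section was omitted, and why, instead of guessing.
    return {
      status: "omitted",
      omissionReason: "baseline-provenance-missing",
    };
  }

  return {
    status: "included",
    provenance: {
      baselineScanId: baseline.scanId,
      baselineSource: baseline.source,
    },
    delta: comparison.delta,
  };
}

// Usage: a baseline without a recorded source yields an explicit omission.
console.log(buildComparisonSection({ baseline: { scanId: "scan-1" }, delta: {} }));
console.log(
  buildComparisonSection({
    baseline: { scanId: "scan-1", source: "axe" },
    delta: { added: 2, resolved: 1 },
  })
);
```

A downstream consumer then sees either an attributed comparison or a stated omission, never a comparison against a baseline the tool could not identify.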

Visual evidence

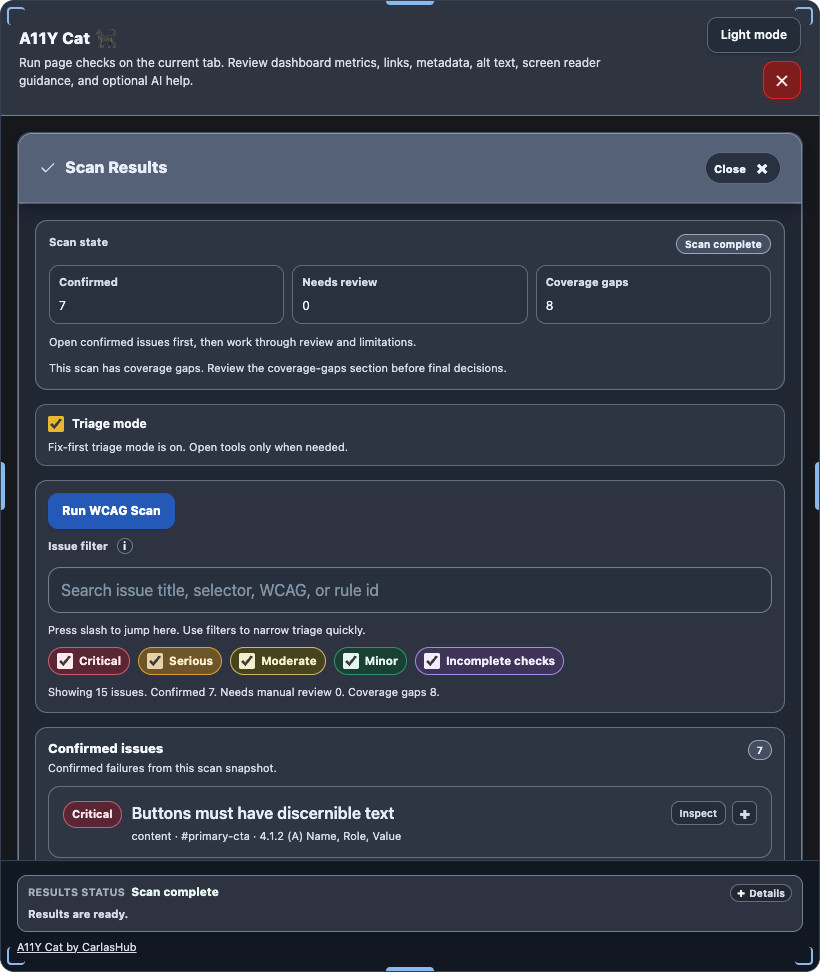

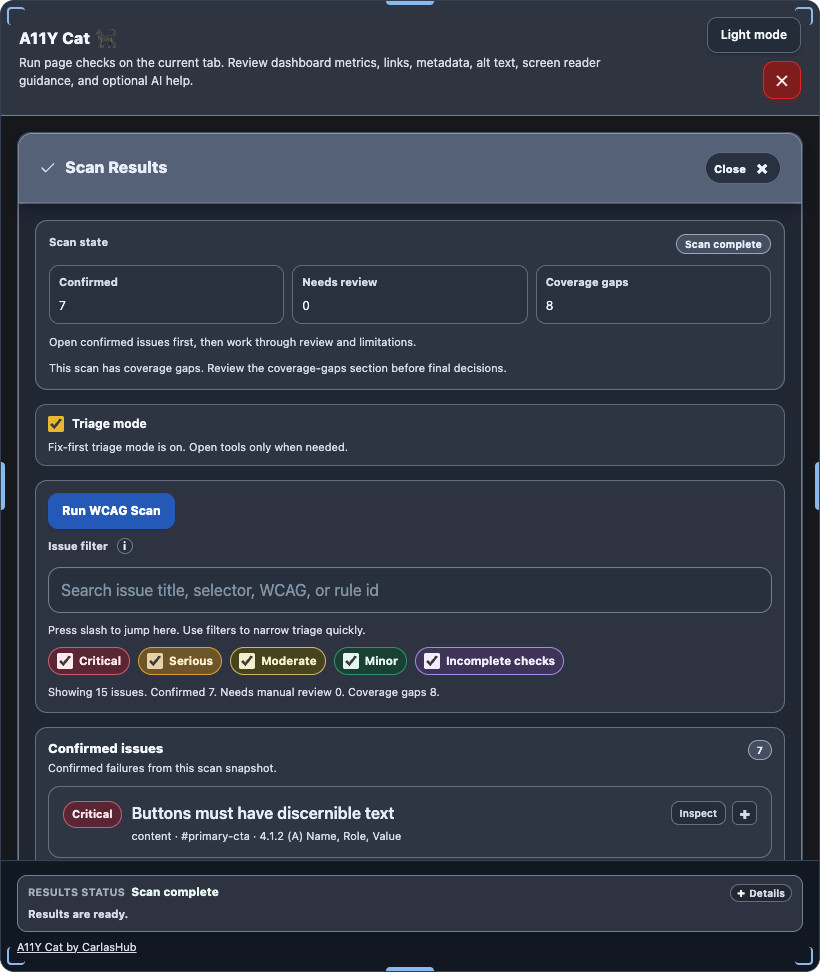

There is no reliable repo-held screenshot that isolates provenance labels or export provenance sections cleanly at the exact points discussed here. The closest visible evidence is the grouped extension scan surface where source-aware rendering eventually feeds into the UI:

What I was really learning here

I was learning that once a tool combines multiple sources and multiple confidence levels, “a finding exists” is no longer enough information. Provenance becomes part of the meaning.

Evidence

- Commits:

  - 48e04b1 – explicit scan source attribution and overlap-aware composition
  - e3f5e38 – capability-class source attribution across runtime, UI, and exports
  - 4dcea36 – export contract hardened with provenance sections and omission rules
  - a2960b0 – export provenance metadata and strict baseline attribution

- Files:

  - ../../src/runtime/modules/source-attribution.js
  - ../../src/runtime/modules/exports.js
  - ../../src/runtime/modules/result-shaping.js
  - ../../README.md
  - ../../tests/node/runtime-modules.test.js