The later AI work feels much less like “let the model help everywhere” and much more like “if AI is here, it needs to fit inside the product’s boundaries.”

After the early Groq and local assistant phases, the AI story changes again with the extension.

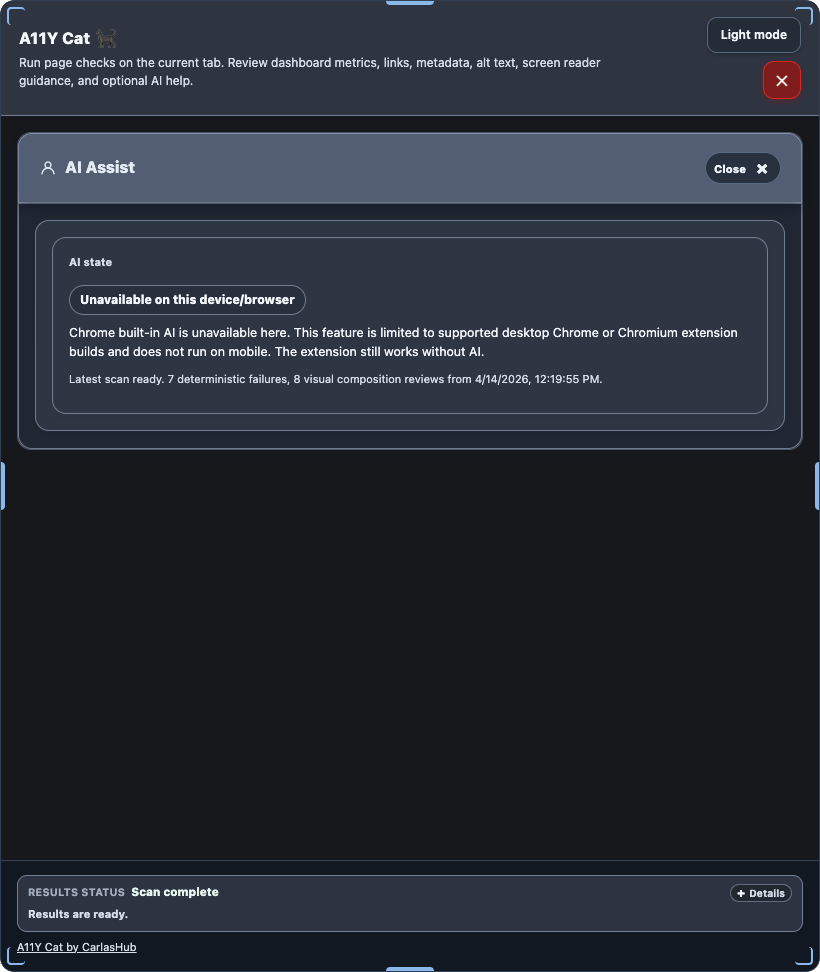

The new path is built around Chrome’s built-in Prompt API, which is exposed to scripts as the LanguageModel API. More importantly, the repo treats that path as optional from the start. The extension still works without AI. The deterministic scan stays primary. The AI surface only appears when the current environment can actually support it. If the model is unavailable, downloading, or unsupported, the product is supposed to hide or disable the affected controls instead of leaving dead ones around.
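A minimal sketch of that availability gating, not the repo's actual code. It assumes the Prompt API shape as I understand it from Chrome's documentation: a `LanguageModel` global whose `availability()` resolves to `"unavailable"`, `"downloadable"`, `"downloading"`, or `"available"`. The helper names `detectAvailability` and `toAssistUiState`, the `"unsupported"` fallback value, and the note strings are all hypothetical.

```javascript
// Hypothetical helper: ask the environment whether the Prompt API is usable.
// Falls back to "unsupported" when the LanguageModel global is missing or throws.
async function detectAvailability(scope = globalThis) {
  const api = scope.LanguageModel;
  if (!api || typeof api.availability !== "function") return "unsupported";
  try {
    return await api.availability();
  } catch {
    return "unsupported";
  }
}

// Hypothetical helper: map an availability value to what the UI may show.
// Anything that is not explicitly recognized hides the AI surface entirely.
function toAssistUiState(availability) {
  switch (availability) {
    case "available":
      return { showPanel: true, actionsEnabled: true, note: "" };
    case "downloadable":
      return { showPanel: true, actionsEnabled: false, note: "Model download required." };
    case "downloading":
      return { showPanel: true, actionsEnabled: false, note: "Model is downloading." };
    default:
      // "unavailable", "unsupported", or an unknown value: no dead controls.
      return { showPanel: false, actionsEnabled: false, note: "" };
  }
}
```

The default branch is the important part: an unknown state is treated the same as no AI at all, so the deterministic scan is never blocked by it.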

That is a much more mature AI integration pattern than the early experimental phase.

I think the service-worker architecture matters here. The injected page UI does not talk straight to the model. It sends bounded requests into the extension context, where the service worker checks availability and runs the prompt calls. That keeps the AI path closer to the extension’s trust and permission boundary instead of letting it sprawl across the page context.
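Here is roughly how that boundary could look in the service worker. This is a sketch under assumptions, not what `service-worker.js` actually contains: the message type `"ai-assist"`, the action names, the input cap, and `handleAssistRequest` are hypothetical. The `chrome.runtime.onMessage` listener and the `LanguageModel` calls (`availability()`, `create()`, `prompt()`, `destroy()`) are the standard extension and Prompt API calls as I understand them.

```javascript
// Hypothetical allow-list and input cap: the page can only ask for known actions.
const ALLOWED_ACTIONS = new Set(["summarize-page", "explain-issue"]);
const MAX_INPUT_CHARS = 2000;

// Validates the request, checks availability, then runs one bounded prompt.
// The model is passed in so the handler can be exercised with a mock.
async function handleAssistRequest(message, languageModel) {
  if (!message || message.type !== "ai-assist" || !ALLOWED_ACTIONS.has(message.action)) {
    return { ok: false, reason: "unsupported-request" };
  }
  if (!languageModel || (await languageModel.availability()) !== "available") {
    return { ok: false, reason: "ai-unavailable" };
  }
  const input = String(message.payload ?? "").slice(0, MAX_INPUT_CHARS);
  const session = await languageModel.create();
  try {
    const text = await session.prompt(`${message.action}:\n${input}`);
    return { ok: true, text };
  } finally {
    session.destroy(); // release the session even if the prompt fails
  }
}

// Wiring for the extension context; skipped when chrome.* is not present.
if (typeof chrome !== "undefined" && chrome.runtime?.onMessage) {
  chrome.runtime.onMessage.addListener((message, _sender, sendResponse) => {
    handleAssistRequest(message, globalThis.LanguageModel)
      .then(sendResponse)
      .catch(() => sendResponse({ ok: false, reason: "ai-error" }));
    return true; // keep the message channel open for the async response
  });
}
```

On the page side, the injected UI would only call something like `chrome.runtime.sendMessage({ type: "ai-assist", action: "explain-issue", payload })` and render whatever comes back. It never holds a model session itself.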

The UI shape also tells the story. The extension AI Assist surface is not free chat. It is a state summary plus unlocked actions, split between page-level actions and issue-specific actions, with a separate result area. That is a very workflow-oriented design. It feels like the builder had already learned that AI is safer and more useful when it is attached to a known task and a known data shape.
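One way to picture that surface is as a small view model rather than a chat transcript. The sketch below is my own illustration of the shape described above. The function name `buildAssistViewModel`, the action ids, and the issue fields are hypothetical and not taken from the repo.

```javascript
// Hypothetical view model: a state summary, two groups of actions, and one result slot.
// Actions are only "unlocked" when the model is reported as available.
function buildAssistViewModel(availability, issues) {
  const ready = availability === "available";
  return {
    summary: ready
      ? "AI Assist is ready."
      : `AI Assist is not ready (${availability}).`,
    // Page-level actions operate on the whole scan result.
    pageActions: ready
      ? [{ id: "summarize-findings", label: "Summarize findings" }]
      : [],
    // Issue-specific actions are tied to one known finding each.
    issueActions: ready
      ? issues.map((issue) => ({
          id: "explain-issue",
          issueId: issue.id,
          label: `Explain ${issue.id}`,
        }))
      : [],
    // Separate result area, empty until an action has run.
    result: null,
  };
}
```

Because every action carries a fixed id and, where relevant, an issue id, the request that eventually reaches the model always has a known task and a known data shape behind it.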

The tests reinforce that. The extension AI suite covers unavailable state, download-required state, and bounded output rendering with mocked prompt responses. That is important. The project is not only testing happy-path prose generation. It is testing whether the product behaves honestly when the AI path is missing or partial.
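The real suite runs in Playwright against the extension, but the three cases can be sketched in plain JavaScript with a mocked model. Everything here is an assumption for illustration: `createMockLanguageModel`, `toBoundedResultText`, `runAssist`, the prompt text, and the character cap are hypothetical names and values, and the mock only imitates the parts of the Prompt API the sketch touches.

```javascript
// Hypothetical mock: reports a fixed availability and returns a canned reply.
function createMockLanguageModel(availability, reply = "") {
  return {
    availability: async () => availability,
    create: async () => ({ prompt: async () => reply, destroy() {} }),
  };
}

// Hypothetical bounding step: collapse whitespace and cap the rendered length.
function toBoundedResultText(raw, maxChars) {
  const text = String(raw ?? "").replace(/\s+/g, " ").trim();
  if (text.length <= maxChars) return text;
  return text.slice(0, maxChars - 1).trimEnd() + "…";
}

// Runs one assist action and reports both the state and the bounded text.
// When the model is not available, no prompt is sent and text stays null.
async function runAssist(languageModel, maxChars = 200) {
  const state = await languageModel.availability();
  if (state !== "available") return { state, text: null };
  const session = await languageModel.create();
  try {
    const raw = await session.prompt("Summarize the scan findings.");
    return { state, text: toBoundedResultText(raw, maxChars) };
  } finally {
    session.destroy();
  }
}
```

With this shape, the unavailable case is `runAssist(createMockLanguageModel("unavailable"))`, the download-required case uses `"downloadable"`, and the bounded-output case feeds an overly long canned reply and checks that the rendered text stays within the cap.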

I also like that the docs keep repeating the limits. Device-dependent. Optional. Desktop only. Does not replace deterministic findings. Does not decide compliance. Can require model download. Those lines might sound repetitive, but I think they are exactly what this phase needed.

What changed most here was not the presence of AI. It was the confidence level of the surrounding architecture. The project stopped treating AI as a clever layer and started treating it as a bounded capability with explicit availability and trust limits.

Visual evidence

The extension AI Assist surface is directly represented in the repo’s store/reviewer screenshot set.

What I was really learning here

I was learning that AI integration gets much easier to live with once it becomes optional, task-bounded, and availability-aware. The safer move was not “more AI.” It was tighter placement and clearer limits.

Evidence

- Commits:
  - a5bba65 – extension AI UI and release hardening
  - fad7316 – extension AI runtime and UI state fixed
  - 984ae2a – extension AI surfaces hardened
  - 5df091b – Google Prompt API compatibility hardened

- Files:
  - ../../extension/content/prompt-ai-service.js
  - ../../extension/background/service-worker.js
  - ../../extension/README.md
  - ../../tests/playwright/extension-ai.spec.js
  - ../../README.md