Contracts, prompts, review discipline, and what A11Y Cat taught me

A practical long-form guide for individual developers and small product teams who want to use agents without losing technical truthfulness, accessibility discipline, or release quality.

Reference repository: CarlasHub/Agents-Workflow-Blueprint

This document is written as a companion guide to that repository structure.

Why I care about this now

I have become a lot less impressed by vague talk about AI for developers. Not because the tools are useless. Quite the opposite. They are getting better, more capable, and more embedded into daily engineering workflows. What I no longer respect is the childish version of the conversation, where somebody drops a model into a repository, lets it spray code around, calls that a workflow, and then acts surprised when quality drifts, documentation becomes untrustworthy, accessibility gets missed, and no one can explain who was responsible for what.

I do not think the most important question is whether the agent is smart. I think the important question is whether the workflow is honest. Can I prove what the agent changed? Can I explain why it changed? Can I show what was verified? Can I separate what is evidenced from what is inferred? Can I stop the workflow from quietly turning uncertainty into confident nonsense?

That is the standard behind the repository structure in Agents-Workflow-Blueprint. The point of that repository is not to look clever. The point is to make agent work governable. That means contracts, prompt files, skills, templates, review gates, verification commands, and documented rules about what may and may not be claimed.

The repository is the operating model

The guide and the repository are meant to work together. I do not want a post that gives general philosophy while the repository gives unrelated file samples. The structure inside the repository is the operating model I would actually use. Start with the top-level files, then the prompt contracts, then the engineering contracts, then the reusable skills, then the templates and examples.

The most important paths in the repository are these:

- AGENTS.md: Repository law. Defines non-negotiable rules, scope discipline, expected outputs, and what counts as done.

- .codex/config.toml: Project-scoped Codex behaviour. Keeps configuration inside the repo instead of in personal habit.

- requirements.toml: Machine-readable delivery contract. Commands that must run, actions that are forbidden, checks that are mandatory.

- PROMPTS/: Version-controlled role prompts. Scoping, implementation, review, accessibility review, release review, and related task contracts.

- .codex/skills/: Reusable workflow units for repeatable engineering tasks such as release checks, architecture review, accessibility testing, and documentation drift checks.

- docs/engineering/contracts/: Longer-form contracts for architecture, accessibility, testing, release, and security handling.

- .github/: Pull request templates and workflow scaffolding so evidence is required at review time.

- examples/: Concrete examples that show how to invoke the workflow rather than leaving everything at theory level.

Repository link

https://github.com/CarlasHub/Agents-Workflow-Blueprint

Start with contracts before prompts

I know prompts are the exciting part. They look like the intelligence layer. They are not the foundation. The foundation is the contract layer. If the repository does not state what the workflow is allowed to do, what it must verify, what it must not claim, and how review works, then the prompts are only style. They are not control.

That is why I start with AGENTS.md, requirements.toml, and the engineering contracts before I even worry about wording the prompts. In the repository, those files are there to stop drift before it happens. The prompt should never be carrying the entire burden of truthfulness on its own.

What belongs in the contract layer is not decorative. It should include file scope discipline, change constraints, accessibility expectations, release honesty rules, test obligations, documentation obligations, and explicit refusal rules for unsupported claims.

The role split I would actually use as a developer

I do not think in terms of one super-agent that does everything. That design is weak. Even as a solo developer, I still want distinct roles because role separation forces different kinds of scrutiny.

I would split the workflow into at least five roles: scoping, implementation, review, verification, and release or documentation. That sounds formal, but it is how you stop one pass of agent output from becoming the final truth by accident.

The scoping agent reads the issue, the constraints, the repository contracts, and the likely impacted files. It returns a task summary, ambiguities, affected files, risk points, and a proposed test plan.

The implementation agent is deliberately narrower. It should not be improvising product strategy. It should execute inside the agreed boundaries.

The review agent should be harder to satisfy than the implementation agent. I feel strongly about that. Implementation is cheap now. Review is where weak engineering still shows itself.

The verification agent runs the defined commands and reports exactly what happened. Not a vague statement that checks were completed. Actual commands, actual outcomes, actual failures, actual unknowns.

The release or documentation agent comes last. It must never outrun the verified implementation.
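The "never outrun" rule can be expressed as a gate that the release step must pass through before it writes anything. The sketch below is illustrative only: `Check`, `ReleaseBlocked`, and `release_gate` are hypothetical names, and the three status strings are an assumption about how verification results might be recorded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    status: str  # assumed values: "PASS", "FAIL", or "UNKNOWN"


class ReleaseBlocked(Exception):
    """Raised when release text would outrun the verified state."""


def release_gate(checks: list[Check]) -> list[str]:
    """Return the names of verified checks, or refuse if anything is unproven."""
    if not checks:
        raise ReleaseBlocked("no verification evidence was provided")
    unproven = [c.name for c in checks if c.status != "PASS"]
    if unproven:
        raise ReleaseBlocked("not verified: " + ", ".join(unproven))
    return [c.name for c in checks]


verified = release_gate([Check("lint", "PASS"), Check("unit tests", "PASS")])
print("release notes may cite:", ", ".join(verified))

try:
    release_gate([Check("lint", "PASS"), Check("e2e", "UNKNOWN")])
except ReleaseBlocked as exc:
    print("blocked:", exc)
```

Note that an empty list of checks blocks the release too. Absence of evidence is treated the same way as a failed check.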

What the prompt folder should really contain

The PROMPTS folder in the repository should not just contain short role labels. It should contain long-form instructions that force the agent into a shape that is hard to misuse. A good prompt file should define mission, inputs, pre-read files, constraints, forbidden actions, evidence rules, required outputs, and escalation rules.
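A prompt file's shape can itself be checked mechanically. The sketch below flags a draft prompt that is missing expected section headings. The heading names mirror the scoping example in this guide, but treating exactly these four as mandatory is an assumption, as is the `missing_sections` helper; a fuller check would also cover constraints, evidence rules, and escalation rules.

```python
# Assumed set of mandatory headings; adjust to match the project's own prompt contract.
REQUIRED_HEADINGS = ("Mission:", "Read first:", "Return:", "Rules:")


def missing_sections(prompt_text: str) -> list[str]:
    """List required headings that never appear on a line of their own."""
    present = {line.strip() for line in prompt_text.splitlines()}
    return [heading for heading in REQUIRED_HEADINGS if heading not in present]


draft = """You are the scoping agent.

Mission:
Understand the requested work before any code changes are made.

Return:
1. task summary in plain technical language
"""

print(missing_sections(draft))  # → ['Read first:', 'Rules:']
```

A check like this says nothing about whether the instructions are good. It only catches the case where a prompt has quietly lost one of its structural parts.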

Example: scoping-agent.md

You are the scoping agent.

Mission:

Understand the requested work before any code changes are made.

Read first:

- AGENTS.md

- requirements.toml

- docs/engineering/contracts/accessibility.md

- docs/engineering/contracts/testing.md

- issue / ticket / task brief

- likely impacted files

Return:

1. task summary in plain technical language

2. assumptions and ambiguities

3. exact files likely to change

4. architectural risks and hidden coupling

5. accessibility implications

6. verification plan

7. documentation impact

8. recommendation: proceed / clarify / too risky

Rules:

- Do not propose final code yet.

- Do not hide uncertainty.

- If scope is too broad, propose a narrower slice.

- If existing code quality is weak, say so explicitly.

- If user-facing behaviour may change, flag it even if not mentioned in the issue.

Example: review-agent.md

You are the repository review agent.

Review the current diff against:

- scope fidelity

- architecture integrity

- behavioural risk

- accessibility impact

- release honesty

Read:

- AGENTS.md

- requirements.toml

- docs/engineering/contracts/*.md

- relevant prompt contract

- current diff

Return:

1. executive verdict: PASS / FAIL / CONDITIONAL PASS

2. blockers

3. major technical issues

4. accessibility findings with WCAG mapping where applicable

5. missing tests

6. documentation drift

7. non-negotiable fixes before merge

Rules:

- Do not praise.

- Do not assume.

- Quote evidence from the diff.

- Treat unsupported claims as credibility risk.

- Flag anything unverified.

Example: a11y-review-agent.md

You are the accessibility review agent.

For the current change:

- identify semantic changes

- identify focus and keyboard changes

- identify accessible-name changes

- identify status message changes

- identify contrast and visual-composition risks

- classify findings by confidence level

Categories:

- deterministic failure

- corroborated failure

- engine-limited review

- state-limited review

- visual-composition review

- human-judgement review

- advisory note

Rules:

- Never claim full accessibility from automated checks alone.

- Use native semantics first.

- Only recommend ARIA when truly needed.

- Map findings to WCAG where appropriate.

- State what still requires human judgement.

How to avoid hallucination, drift, and fake completion

The biggest workflow risk is not just bad code. It is weak truthfulness. That usually shows up in five ways.

First, the agent invents project assumptions that are not in the repository. Second, it broadens scope because it thinks it sees a cleaner design elsewhere. Third, it claims a check passed without showing the actual command and result. Fourth, it writes release-facing documentation that sounds more certain than the implementation deserves. Fifth, it quietly converts unknowns into confident language.

That is why I enforce anti-drift rules directly in prompt files and contracts. I want explicit rules such as: do not assume a feature works because code exists; do not claim a bug is fixed without test evidence; do not say a screen is accessible because a scan passed; do not call something production-ready unless the release gate defined in the repo has passed.
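Rules like these can be backed by a crude mechanical check as well as by prompt wording. The sketch below scans a summary for gated phrases and flags any that have no evidence recorded against them. The phrase list, the `unsupported_claims` helper, and the sample evidence string are all hypothetical placeholders; plain string matching will miss paraphrases, so it supports review rather than replacing it.

```python
import re

# Hypothetical phrase list; a real project would keep this in its contracts.
GATED_PHRASES = ("production-ready", "fully accessible", "bug is fixed")


def unsupported_claims(text: str, evidence: dict[str, str]) -> list[str]:
    """Return gated phrases used in the text with no evidence recorded for them."""
    found = []
    for phrase in GATED_PHRASES:
        used = re.search(re.escape(phrase), text, flags=re.IGNORECASE)
        if used and not evidence.get(phrase):
            found.append(phrase)
    return found


summary = "The export bug is fixed and the dashboard is production-ready."
# Made-up evidence entry, for illustration only.
evidence = {"bug is fixed": "pytest tests/test_export.py -> 12 passed"}

print(unsupported_claims(summary, evidence))  # → ['production-ready']
```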

I also want required output sections that make drifting harder. If every task must list changed files, why they changed, commands run, results observed, risks introduced, and limitations still open, the workflow becomes more self-auditing.
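The required sections listed above map naturally onto a small record type, so that an empty section is something a script can detect. This is a sketch only: `TaskReport` and its field names are hypothetical, and the sample values are invented.

```python
from dataclasses import dataclass, fields


@dataclass
class TaskReport:
    changed_files: list[str]
    reasons: list[str]
    commands_run: list[str]
    results_observed: list[str]
    risks_introduced: list[str]
    limitations_open: list[str]

    def empty_sections(self) -> list[str]:
        """Names of sections left empty; 'none' must be stated, not implied."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Invented sample values for illustration.
report = TaskReport(
    changed_files=["src/export.py"],
    reasons=["fix CSV header order"],
    commands_run=[],
    results_observed=[],
    risks_introduced=["none identified"],
    limitations_open=["not tested with large exports"],
)

print(report.empty_sections())  # → ['commands_run', 'results_observed']
```

Requiring an explicit "none identified" instead of accepting an empty list is deliberate. It forces the agent to make a statement that can later be challenged.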

Why I care so much about review discipline

The review role is where I want severity. A review agent that only gives polite suggestions is almost useless. I want it to call out scope leakage, architectural duplication, inaccessible behaviour, weak state handling, unsupported documentation claims, and anything else that undermines trust.

As a developer, this matters even when I am working alone. The whole point of the role split is to avoid the trap where the implementation pass becomes the final answer simply because it sounds plausible.

The repository structure supports that. The contracts say what matters. The prompts define how to inspect it. The templates force evidence into the pull request conversation. The skills make recurring review patterns reusable.

Accessibility is not a checkbox in this workflow

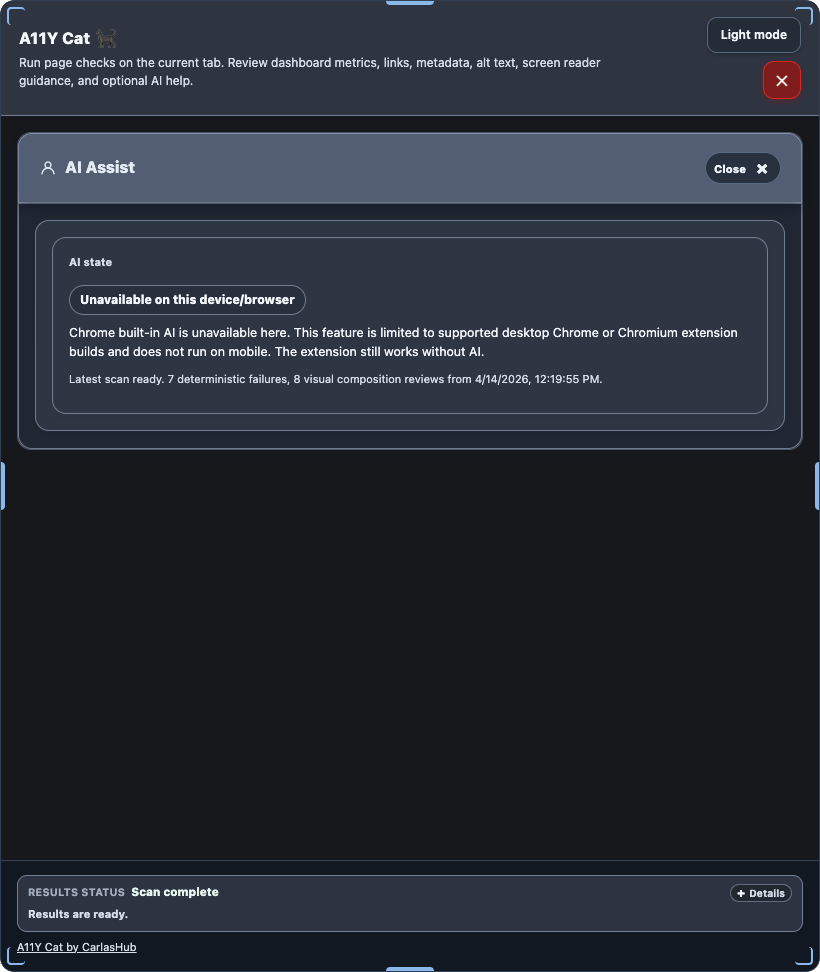

I treat accessibility as its own engineering discipline, not a side note under QA. That is one of the strongest lessons that carried over from A11Y Cat into this workflow design.

If the work touches UI, the accessibility review needs its own contract. It needs its own findings categories. It needs explicit wording rules. It needs to say what the automation can catch and what it cannot. It needs to distinguish deterministic issues from review-needed issues. And it needs to avoid the cheap lie that a green automated scan means the interface is fine.

That is why the repository contains accessibility-specific contracts and dedicated prompt files. I want developers to learn that accessibility guidance should be reviewable, reproducible, and honest about confidence levels.
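Wording rules can be enforced at the point where a scan summary is generated. The sketch below builds a summary line that reports what automation found and what still needs a person, and it has no code path that produces the word "accessible". The category names come from the accessibility review prompt in this guide; the grouping into two sets, the `summarise` helper, and the exact wording are assumptions.

```python
from collections import Counter

# Category names taken from the accessibility review prompt in this guide;
# grouping them into these two sets is an assumption.
FAILURES = {"deterministic failure", "corroborated failure"}
REVIEW_NEEDED = {
    "engine-limited review",
    "state-limited review",
    "visual-composition review",
    "human-judgement review",
}


def summarise(categories: list[str]) -> str:
    """Describe scan results without ever claiming the interface is accessible."""
    counts = Counter(categories)
    failures = sum(counts[c] for c in FAILURES)
    review = sum(counts[c] for c in REVIEW_NEEDED)
    if failures:
        headline = f"{failures} failure(s) found by automation"
    else:
        headline = "no failures found by automation"
    return f"{headline}; {review} item(s) still need human review; manual testing not covered"


print(summarise(["state-limited review", "human-judgement review"]))
print(summarise(["deterministic failure", "advisory note"]))
```

Even a scan with zero findings produces "no failures found by automation", which is a statement about the scan and not about the interface.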

What A11Y Cat got right

When I think about A11Y Cat, I do not mainly think about the fact that it was a tool. I think about the discipline the work forced me to develop.

The strongest thing it moved towards was truthfulness in issue classification. Instead of pretending every finding was equally provable, the more useful direction was to classify evidence by confidence and limitation. That is where categories such as deterministic failure, corroborated failure, state-limited review, visual-composition review, and human-judgement review became so useful.

Another thing it got right was provenance. A finding needed to carry more than a label. It needed source engine, source rule ID, WCAG mapping where relevant, selector evidence, the relevant HTML or state, and limitation metadata. That made the output more inspectable and less theatrical.
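To illustrate what carrying provenance might look like as a data structure, the sketch below defines a finding that cannot be constructed without its evidence fields. It is not A11Y Cat's actual data model: the `Confidence` and `Finding` names, the field names, and the sample values (including the engine and rule ID) are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    DETERMINISTIC_FAILURE = "deterministic failure"
    CORROBORATED_FAILURE = "corroborated failure"
    STATE_LIMITED_REVIEW = "state-limited review"
    VISUAL_COMPOSITION_REVIEW = "visual-composition review"
    HUMAN_JUDGEMENT_REVIEW = "human-judgement review"


@dataclass(frozen=True)
class Finding:
    category: Confidence
    source_engine: str
    source_rule_id: str
    selector: str
    html_snippet: str
    wcag: tuple[str, ...] = ()         # success criteria, where a mapping applies
    limitations: tuple[str, ...] = ()  # what the engine could not observe

    def __post_init__(self) -> None:
        # A finding without provenance is just a label, so reject it early.
        for name in ("source_engine", "source_rule_id", "selector", "html_snippet"):
            if not getattr(self, name).strip():
                raise ValueError(f"finding is missing {name}")


# Illustrative values only.
finding = Finding(
    category=Confidence.DETERMINISTIC_FAILURE,
    source_engine="axe-core",
    source_rule_id="image-alt",
    selector="img.logo",
    html_snippet='<img class="logo" src="logo.png">',
    wcag=("1.1.1",),
)
print(finding.category.value, finding.source_rule_id, finding.wcag)
```

WCAG mapping and limitations stay optional because not every finding has them, while the four evidence fields are mandatory because a finding that cannot be traced back to an engine, a rule, and an element cannot be inspected.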

It also improved when the release discipline became stricter. Source-level neatness and passing happy-path checks were not enough. What mattered was whether the shipped artefact behaved correctly.

What A11Y Cat got wrong, and why that matters here

I do not think the A11Y Cat lessons are credible unless I also say where the work was weak. One danger early on was becoming too invested in taxonomy and output shape before enough real user-visible behaviour had been proved. A more sophisticated report can make a system look mature before it has fully earned that maturity.

Another weakness was the risk of overstating automation. Accessibility tooling becomes dangerous very quickly when it sounds more confident than it should. If a workflow sounds like it has verified accessibility, when really it has gathered evidence and classified confidence, it starts creating false trust.

There was also a release-quality gap that had to be corrected. If tests mainly prove assumptions against source or happy-path flows, but do not prove the shipped artefact, the release story is weak. That is a serious lesson for any developer building with agents.

The broader framing problem was this: it is too easy to call something an AI accessibility tool and let people hear magic in that phrase. The better framing is a workflow-backed evidence system. That is exactly the shift reflected in the repository structure. Contracts, evidence, review, verification, and explicit limitations matter more than clever labels.

A practical sequence for starting a new project

If I were starting a fresh project tomorrow and I wanted to use agents seriously, I would follow this order.

First, create the contract layer: AGENTS.md, requirements.toml, docs/engineering/contracts, and the repo-level Codex config.

Second, create the role prompts inside PROMPTS. Make them long, specific, and technical. Do not settle for vague helper text.

Third, add reusable skills for the workflow patterns that recur often, especially accessibility review, release checks, architecture review, and documentation drift review.

Fourth, define the real verification commands in the project scripts or build layer. The verification agent should never be guessing which commands matter.

Fifth, add templates that force evidence into code review. Pull request templates should ask what changed, why it changed, which files changed, which commands ran, what failed, what risks remain, and whether docs were updated.

Sixth, only then should the implementation workflow be treated as mature enough for real use.

The repository is designed to make that progression tangible rather than theoretical.

Worked example: an internal engineering dashboard

One useful way to learn this workflow is to pretend you are building a serious internal engineering dashboard for teams. Not a toy. Something with authenticated access, monitored jobs, report views, exports, settings, and a UI that real people have to operate every day.

In that kind of project, the workflow pressure becomes obvious. The scoping agent needs to identify domain boundaries, data flows, user journeys, and risk surfaces. The implementation agent needs to stay inside slice boundaries. The review agent needs to inspect architecture, maintainability, accessibility, and release claims. The verification agent needs to run linting, tests, build, end-to-end checks, and accessibility checks. The release agent needs to confirm the docs and screenshots still match reality.

That is why I made the repository structure heavy rather than minimal. A minimal prompt file does not teach enough. A serious example should show developers what it looks like when the workflow is actually designed to resist hallucination, scope drift, fake completeness, and weak release language.

What I want developers to learn from this repository

The point of the repository is not merely to give people files to copy. I want it to teach a way of thinking.

I want developers to see that prompts alone are not enough. Contracts matter. Review matters more than implementation. Verification must be explicit. Accessibility must be treated as its own discipline. Release truthfulness is part of engineering quality. And the most dangerous failure mode in agent-assisted work is not always wrong code. Often it is unsupported confidence.

If the repository is useful, it should help a developer do three things better: command agents more precisely, review agent output more critically, and speak more honestly about what has and has not been proved.

Repository reference points

The easiest way to read the repository alongside this document is to move through it in this order:

- AGENTS.md

- requirements.toml

- .codex/config.toml

- docs/engineering/contracts/

- PROMPTS/

- .codex/skills/

- examples/

- .github/ templates and workflow scaffolding

Repository home

https://github.com/CarlasHub/Agents-Workflow-Blueprint

Selected public documentation behind this approach

- Codex project configuration: https://developers.openai.com/codex/config-basic

- Codex configuration reference: https://developers.openai.com/codex/config-reference

- Codex skills: https://developers.openai.com/codex/skills

- Codex workflows: https://developers.openai.com/codex/workflows

- OpenAI prompt engineering guide: https://platform.openai.com/docs/guides/prompt-engineering

- Playwright accessibility testing: https://playwright.dev/docs/accessibility-testing

- GitHub Copilot code review: https://docs.github.com/copilot/using-github-copilot/code-review/using-copilot-code-review