By the time I came back to AI in April, I seemed much less interested in “chat” and much more interested in keeping the tool from saying the wrong thing.

The old local assistant path in A11Y Cat is one of the most revealing bits of the repo because it shows a very specific kind of restraint.

By April 7 the project has an assistant backend, but it is not a general chatbot bolted onto the panel. It is a separate local service with a token, an allowlist, structured inputs, bounded actions, grounding files, and output contracts. That is a very different shape from “just ask the model something.”

I think this happened because I had already seen enough of the tool to know where free-form AI would get dangerous. Accessibility tooling is full of places where a confident sentence can outrun the evidence. If the assistant starts improvising on issue counts, selectors, manual validation status, or what the tool has actually checked, it stops being helpful very quickly.

So the backend architecture forces the opposite shape. The bookmarklet UI sends structured scan, issue, and ticket payloads. The backend validates them. It picks action-specific guidance from repo docs. It requires action-specific response schemas. It checks key fields against the original request. And it explicitly keeps manualValidationStatus locked to “required_not_performed” in the output contracts. That is not accidental. That is somebody trying to stop the assistant from sounding more certain than the tool deserves.
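To make the output-contract idea concrete, here is a minimal sketch of what such a check could look like for the "explain one issue" action. It is not the code from output-contracts.js. The function name, the error strings, and the set of echoed fields (issueId, selector, ruleId) are all assumptions made for illustration. Only the locked manualValidationStatus value comes from the repo's description.

```javascript
// Hypothetical sketch: these names are illustrative, not taken from the A11Y Cat repo.
const LOCKED_MANUAL_STATUS = "required_not_performed";

// Assumed set of fields the response must echo unchanged from the request.
const ECHOED_FIELDS = ["issueId", "selector", "ruleId"];

// Returns a list of contract violations; an empty list means the response passes.
function checkExplainIssueResponse(request, response) {
  if (response === null || typeof response !== "object") {
    return ["response is not an object"];
  }

  const errors = [];

  // The explanation itself must be present and non-empty.
  if (typeof response.explanation !== "string" || response.explanation.trim() === "") {
    errors.push("explanation must be a non-empty string");
  }

  // Key fields are compared against the original request, so the model
  // cannot quietly swap in a different selector or issue id.
  for (const field of ECHOED_FIELDS) {
    if (response[field] !== request[field]) {
      errors.push(`${field} does not match the request`);
    }
  }

  // The assistant is never allowed to claim manual validation happened.
  if (response.manualValidationStatus !== LOCKED_MANUAL_STATUS) {
    errors.push(`manualValidationStatus must be "${LOCKED_MANUAL_STATUS}"`);
  }

  return errors;
}
```

In a design like this, a response that fails any check would be rejected rather than repaired, which keeps the model's wording from drifting away from the scan data it was given.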

Even the supported actions are narrow: explain one issue, draft one ticket, generate manual review steps, summarize the latest scan, summarize confirmed issues only. No free-form “what should I do about accessibility?” chat. No pretending the assistant is a compliance oracle. The docs are explicit about that.
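A bounded action list like this can be enforced at the request boundary. The sketch below assumes a request shape of `{ action, payload }` and invents identifiers for the five actions. The real names and request format in app.js may differ.

```javascript
// Hypothetical identifiers for the five supported actions; the repo's real names may differ.
const ALLOWED_ACTIONS = new Set([
  "explain_issue",
  "draft_ticket",
  "manual_review_steps",
  "summarize_latest_scan",
  "summarize_confirmed_issues",
]);

// Accepts only a known action with a structured payload; anything else is refused.
function parseAssistantRequest(body) {
  if (body === null || typeof body !== "object" || Array.isArray(body)) {
    return { ok: false, error: "request body must be a JSON object" };
  }
  if (typeof body.action !== "string" || !ALLOWED_ACTIONS.has(body.action)) {
    return { ok: false, error: "unsupported action" };
  }
  // Assumed rule: a free-form prompt field is rejected outright, not ignored.
  if ("prompt" in body) {
    return { ok: false, error: "free-form prompts are not accepted" };
  }
  if (body.payload === null || typeof body.payload !== "object") {
    return { ok: false, error: "payload must be a structured object" };
  }
  return { ok: true, action: body.action, payload: body.payload };
}
```

Because the allowlist is a closed set, adding an open-ended "chat" action would require a deliberate code change instead of happening by default.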

I really respect this part of the repo because it shows the builder learning from the problem instead of from AI fashion. The tool needed help with explanation and handoff, but it also needed guardrails around what explanation was allowed to claim.

Later, the public story moves away from this localhost Ollama path and toward optional on-device AI inside the extension. But the old local assistant does not disappear as if it never existed. It stays documented as an archive in LOCAL_ASSISTANT.md. I like that too. It makes the project history easier to trust. Not every direction becomes the forever direction, but the repo does not pretend those paths were not real.

This assistant backend is also one of the clearest places where the project starts treating the product problem and the engineering constraints as the same job. The user-facing problem is “explain and hand off results more easily.” The engineering answer is “only let the assistant speak in structures that stay tied to real tool data.”

That is a much better lesson than just “add AI.”

Visual evidence

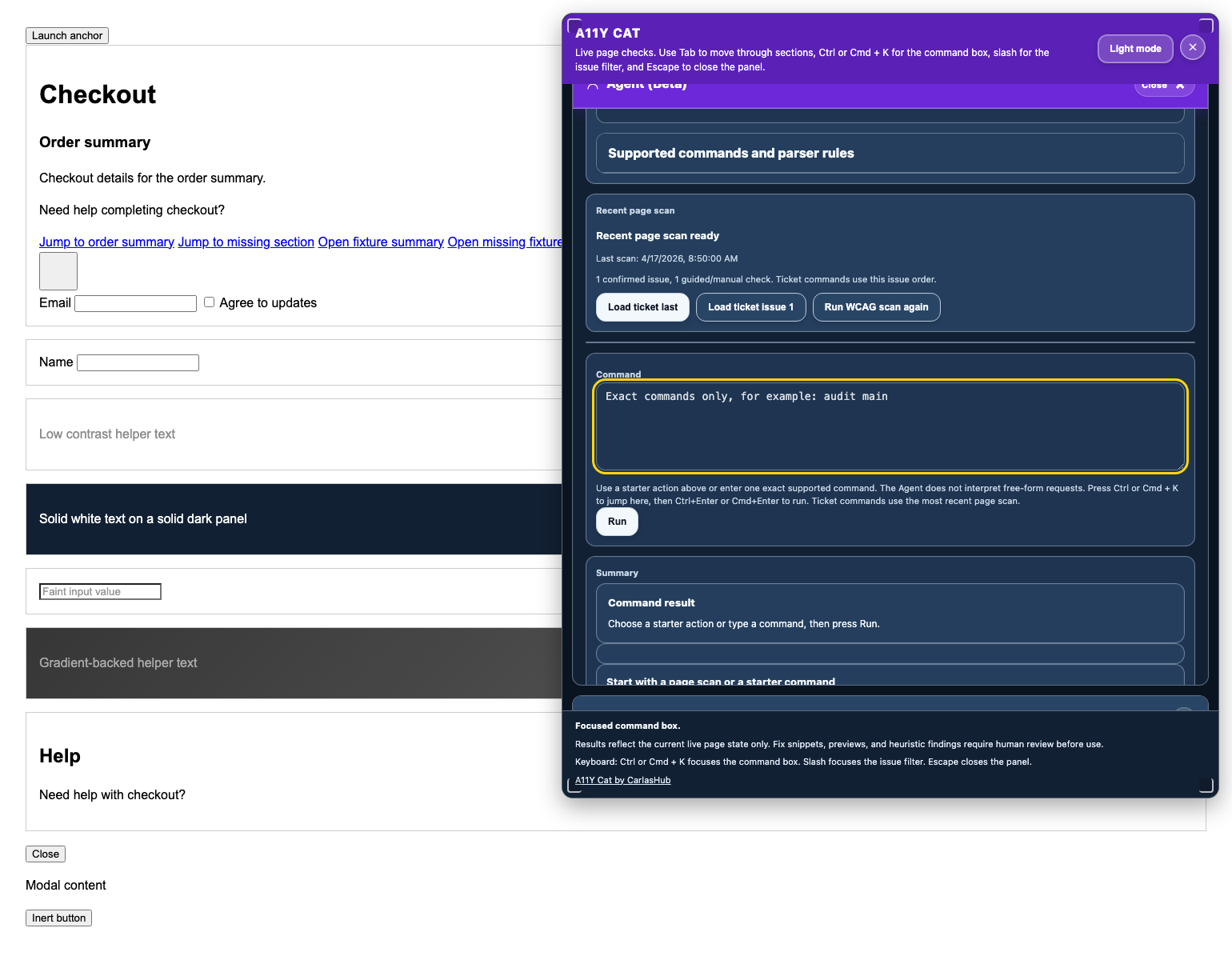

There is no reliable direct screenshot of the localhost backend itself because it is a service boundary, not a standalone UI. But I could reconstruct the April historical panel state with the agent section open from the same 8b09635 era when the backend entered the public repo:

What I was really learning here

I was learning that if I wanted AI help without turning the tool into guesswork, I needed to constrain the assistant much harder than I first thought. The useful part was not open chat. It was bounded explanation tied back to real scan data.

Evidence

- Commits:

- 8b09635 – local assistant backend introduced into the public repo
- 27afee8 – assistant backend and release gate evolved further
- 4425e4a – truth-model-only active flows and canonical export bridge enforced

- Files:

- ../../LOCAL_ASSISTANT.md
- ../../assistant-backend/app.js
- ../../assistant-backend/output-contracts.js
- ../../assistant-backend/grounding.js
- ../../assistant-backend/project-context.md
- ../../tests/node/assistant-backend.test.js

- Visuals:

- phase-03-reconstructed-verified-bookmarklet/panel-agent-open.png – direct historical screenshot captured from a checked-out worktree at 8b09635

- Inference:

- The claim that this architecture was a reaction against overconfident AI is supported by the contracts and docs, but the motivation is still an inference from those controls.